GSA SER Link Lists

Understanding GSA SER Link Lists

In the world of automated SEO, few tools command as much respect—and controversy—as GSA Search Engine Ranker. Central to leveraging this powerful software effectively is the intelligent use of GSA SER link lists. These lists are the fuel that powers your campaigns, dictating the diversity, authority, and safety of your backlink profile. A well-curated link list can propel your rankings, while a poor one can lead to algorithmic penalties and wasted resources. This guide explores every facet of GSA SER link lists, from what they contain to how to build and maintain them for long-term success.

What Exactly Are GSA SER Link Lists?

A GSA SER link list is a structured collection of target URLs where the software will attempt to create backlinks. Unlike a simple list of domains, a true GSA SER link list is engineered to work specifically with the platform’s verification engine. Each entry typically includes the full submission URL, the platform type identifier (such as WordPress, Joomla, or a generic blog comment script), and often a footprint to help GSA identify similar opportunities on the fly. These lists can range from a few hundred hand-picked endpoints to massive databases containing millions of pre-analyzed targets.

The Anatomy of an Effective List

Not all link lists are created equal. A robust GSA SER link list is usually stored as a .txt or .csv file, or in a proprietary format that retains metadata. The most critical columns include the target URL, the platform engine, the PageRank (PR) or other authority metric (if known), and the contextual category. Some advanced lists even embed anchor text suggestions or login credentials for platforms that sit behind a simple registration wall. The granularity of this data determines how efficiently GSA can post without tripping its internal spam filters.
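To make the column layout concrete, here is a minimal sketch that reads a CSV link list into typed records. The column names (url, engine, authority, category) and the sample rows are assumptions for illustration only; real GSA SER site lists and vendor exports may use a different layout, so adjust the field names to match your files.

```python
import csv
import io
from dataclasses import dataclass
from typing import Optional


# Hypothetical column layout: url, engine, authority, category.
# Real GSA SER site lists and vendor exports may be formatted differently.
@dataclass
class LinkTarget:
    url: str
    engine: str
    authority: Optional[float]  # None when the metric is unknown
    category: str


def parse_link_list(text: str) -> list[LinkTarget]:
    """Parse CSV text (with a header row) into LinkTarget records."""
    targets = []
    for row in csv.DictReader(io.StringIO(text)):
        raw_authority = (row.get("authority") or "").strip()
        targets.append(
            LinkTarget(
                url=row["url"].strip(),
                engine=row["engine"].strip(),
                # Leave the metric empty rather than guessing a value
                authority=float(raw_authority) if raw_authority else None,
                category=(row.get("category") or "").strip(),
            )
        )
    return targets


sample = """url,engine,authority,category
http://example.com/blog/post-1,WordPress,34,health
http://example.org/index.php?option=com_content,Joomla,,travel
"""

for target in parse_link_list(sample):
    print(target.engine, target.authority, target.url)
```

Keeping the authority metric as `None` when it is missing, instead of defaulting it to zero, makes it easy to tell "unknown" apart from "known to be weak" when you sort or filter later.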

Why Quality Over Quantity Dominates

Early GSA strategies often pushed for sheer volume: spray millions of links and hope for an algorithmic break. Modern search engines, however, have become hyper-adept at sniffing out low-value web 2.0 shells and auto-approved comment sections. Today, the smartest SEOs rely on meticulously verified GSA SER link lists that prioritize sites with genuine traffic, real moderation, and preferably a history of being indexed. A list of 10,000 high-quality, hard-to-find guestbook and article directory targets can easily outperform a bloated 2-million-URL list filled with dead domains and instant-delete platforms.

Key Metrics to Look For

When evaluating any GSA SER link list, consider the following attributes. First, the outbound link count: a page that already carries hundreds of outbound links will pass negligible link juice. Second, the indexation rate: if the page itself isn’t in Google’s index, your link won’t be discovered. Third, platform freshness: self-hosted WordPress blogs may be auto-updated, which can make old footprints useless. Finally, check for de-indexation patterns across entire subnets, which are a clear sign of a penalized network.
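The outbound-link, indexation, and subnet checks above can be sketched as a simple filter. Everything here is an assumption for illustration: the `TargetMetrics` fields, the cutoff of 100 outbound links, and the rule that a subnet is flagged once at least half of three or more known sites on it are de-indexed. How you actually gather these metrics (crawling, index checks, IP lookups) is outside the sketch.

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical per-target metrics. Collecting them (crawling the page,
# checking the index, resolving the IP) is outside the scope of this sketch.
@dataclass
class TargetMetrics:
    url: str
    outbound_links: int  # outbound links found on the target page
    indexed: bool        # whether the page itself appears in the search index
    subnet: str          # e.g. the first three octets of the hosting IP


MAX_OUTBOUND = 100  # assumed cutoff; tune it against your own results


def penalized_subnets(targets, min_sites=3, max_deindexed_share=0.5):
    """Flag subnets where at least max_deindexed_share of known sites are de-indexed."""
    by_subnet = defaultdict(list)
    for t in targets:
        by_subnet[t.subnet].append(t)
    flagged = set()
    for subnet, group in by_subnet.items():
        if len(group) < min_sites:
            continue  # too few sites on this subnet to call it a pattern
        deindexed_share = sum(not t.indexed for t in group) / len(group)
        if deindexed_share >= max_deindexed_share:
            flagged.add(subnet)
    return flagged


def filter_targets(targets):
    """Keep targets that are indexed, not link-saturated, and outside flagged subnets."""
    flagged = penalized_subnets(targets)
    return [
        t for t in targets
        if t.indexed
        and t.outbound_links <= MAX_OUTBOUND
        and t.subnet not in flagged
    ]


targets = [
    TargetMetrics("http://a.example/post", 12, True, "203.0.113"),
    TargetMetrics("http://b.example/post", 450, True, "198.51.100"),
    TargetMetrics("http://c.example/post", 8, False, "192.0.2"),
    TargetMetrics("http://d.example/post", 5, False, "192.0.2"),
    TargetMetrics("http://e.example/post", 9, False, "192.0.2"),
    TargetMetrics("http://f.example/post", 9, True, "192.0.2"),
]
print([t.url for t in filter_targets(targets)])
```

In this made-up sample, f.example is indexed and lightly linked, yet it is still dropped because three of the four sites on its subnet have fallen out of the index. That is the subnet-level signal the checklist describes.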

Building Your Own Custom Link Lists

Relying solely on publicly shared or purchased lists is a risky game, as thousands of other users might be targeting the exact same URLs. Creating a proprietary GSA SER link list gives you a competitive moat. The process begins with advanced footprint scraping using footprints and operators such as “powered by wordpress” “leave a reply” inurl:blog combined with industry-specific keywords. You then feed these scraped URLs into a verification tool that simulates GSA’s posting engine, weeding out failures, captcha walls, and redirects automatically.

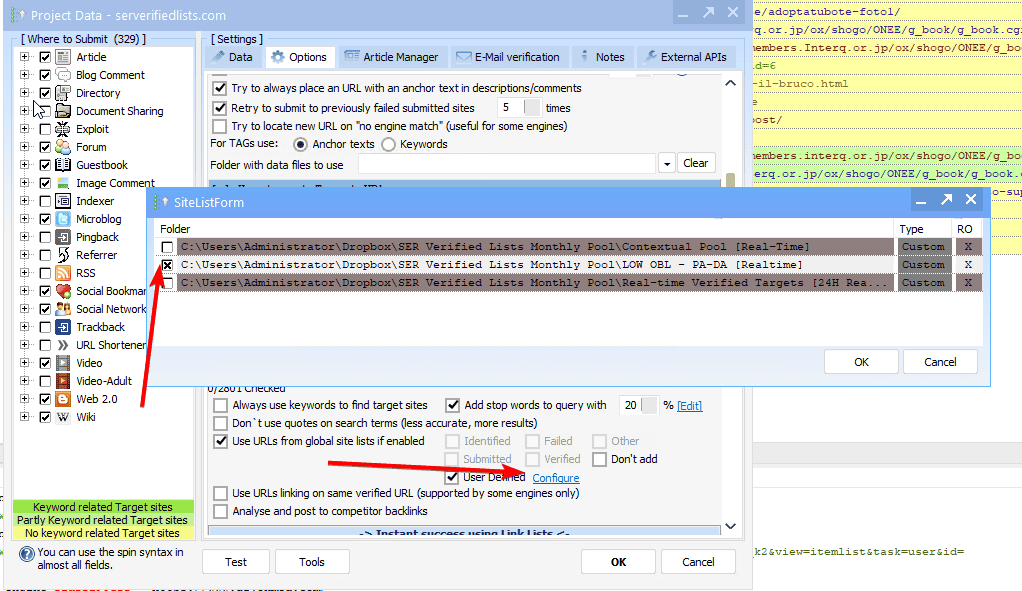

Using Verified Lists for Tiered Link Building

Tiered link building remains the most popular architecture for GSA SER. Your highest-quality, editorially placed links form Tier 1 and point directly at your money site. GSA SER link lists come into play heavily at Tier 2 and Tier 3, where you want massive amounts of supportive link power without risking your main domain. For Tier 2, select mid-quality lists with contextual blogs and article sites. For Tier 3, you can open the floodgates with larger, more generic lists, as the risk is absorbed by the buffer tiers. Contextual relevance filters built into your project settings help even these lower tiers maintain some topical alignment.

Daily Maintenance and List Decay

The half-life of a GSA SER link list is shockingly short. Blog owners delete pages, forums close registrations, and entire subdomains go offline. A list that boasted an 80% success rate last month might drop to 20% today. Smart users set up automatic duplicate checks and schedule weekly re-verification runs. Some enterprise solutions use machine learning to predict which targets will die based on age, IP range, and historical decay, pruning them before they waste submission attempts. Always keep a live freshness score for every segment of your list.
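Two of the maintenance habits above are easy to script. If decay is roughly exponential (an assumption, not a measured law), a drop from 80% to 20% is a factor of 4, which is two half-lives, so a one-month drop implies a half-life of about 15 days. The sketch below computes that figure and performs a basic duplicate check. The URL normalization rules (lower-casing scheme and host, dropping fragments and trailing slashes) are an illustrative choice, not how GSA SER itself compares entries.

```python
import math
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Lower-case scheme and host, drop the fragment and trailing slash so trivial variants match."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def dedupe(urls):
    """Return URLs in their original order with normalized duplicates removed."""
    seen = set()
    unique = []
    for url in urls:
        key = normalize_url(url)
        if key not in seen:
            seen.add(key)
            unique.append(url)
    return unique


def half_life_days(rate_before: float, rate_after: float, elapsed_days: float) -> float:
    """Half-life implied by two success rates, assuming exponential decay."""
    return elapsed_days * math.log(2) / math.log(rate_before / rate_after)


urls = [
    "http://Example.com/page/",
    "http://example.com/page",
    "http://example.com/page#comments",
    "http://example.org/other",
]
print(dedupe(urls))
print(half_life_days(0.80, 0.20, 30))
```

Running the half-life calculation on each segment of a list after every re-verification pass gives you a simple, comparable freshness number per segment.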

Integrating Proxies and Captcha Solvers

A stellar link list is useless without a robust technical backend. Private proxies prevent IP bans across the millions of submission attempts your list demands. Pair your GSA SER link lists with a rotating pool of high-anonymity dedicated proxies, and configure an automatic captcha-solving service. Some lists are pre-tagged with captcha complexity; you can route simple numeric captchas to a less expensive solver, reserving premium OCR and human-like solving for the high-value targets. This cost stratification significantly raises the ROI of any list you deploy.

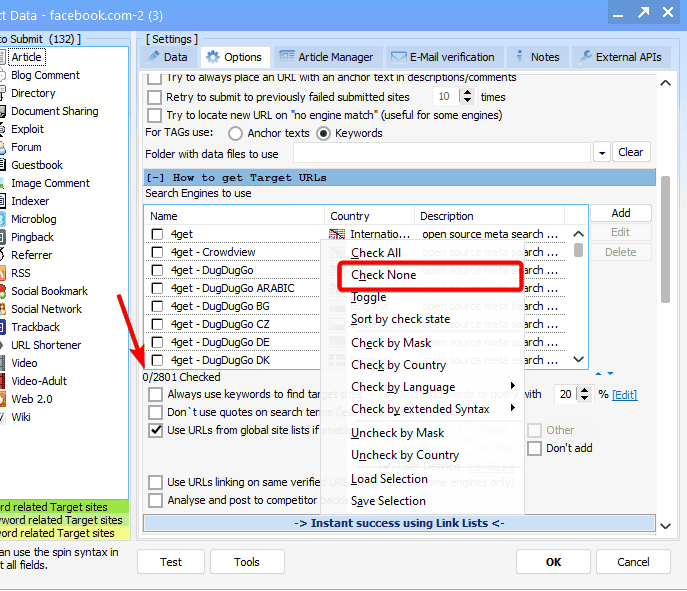

Avoiding Footprints and Penalties

Google’s algorithms are exceptionally good at detecting automated backlink patterns. Using the same link list as everyone else creates a massive, detectable footprint. To mitigate this, blend multiple lists and randomize the order. More importantly, inject your own found targets regularly. Use the platform’s “site lists” feature coupled with random comments, spun anchor texts, and a variety of author names. Diversifying your GSA SER link list sources to include niche forums, .edu blogs, and industry-specific directories also disperses the link graph pattern, mimicking organic link growth.

Where to Source Reliable Lists

While building from scratch is ideal, curated providers do exist. Look for vendors who update their GSA SER link lists weekly and provide sample files to test. Membership sites often include categorized lists: high-PR, DoFollow, English-only, and platform-specific (like Elgg or PHPFox). Always cross-reference any purchased list against a blacklist checker and remove outright spam neighborhoods before loading them into your campaign. A community-verified list with transparent updating logs almost always trumps a cheap, static dump of unknown origin.
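Cross-referencing a purchased list against a blacklist can be as simple as a domain match. The sketch below assumes you already have a set of blacklisted domains (for example, exported from whatever blacklist checker you use). It does not call any particular checker’s API. A parent-domain match is used so that subdomains of a listed domain are removed too.

```python
from urllib.parse import urlsplit


def host_of(url: str) -> str:
    """Extract the lower-cased host name from a URL ("" if none)."""
    return (urlsplit(url.strip()).hostname or "").lower()


def is_blacklisted(host: str, blacklist: set) -> bool:
    """True if the host or any of its parent domains is in the blacklist."""
    labels = host.split(".")
    return any(".".join(labels[i:]) in blacklist for i in range(len(labels)))


def remove_blacklisted(urls, blacklist):
    """Drop URLs whose host (or a parent domain) appears in the blacklist."""
    cleaned = {d.lower().strip(".") for d in blacklist}
    return [u for u in urls if not is_blacklisted(host_of(u), cleaned)]


# Hypothetical purchased list and blacklist entries
purchased = [
    "http://blog.spam-farm.example/post",
    "http://good.example/a",
    "http://spam-farm.example.net/x",
]
print(remove_blacklisted(purchased, {"spam-farm.example"}))
```

Matching on whole domain labels, rather than on substrings, avoids false positives such as spam-farm.example.net being removed just because its name contains a blacklisted string.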

The Future of Automated Link Lists

As search engines evolve, so must the humble link list. We are moving toward context-aware lists where each URL is not just a target but a data packet containing semantic relevance scores, entity associations, and expected dwell time. GSA SER’s ongoing updates are already supporting more sophisticated post templates that can manipulate these variables. The next generation of GSA SER link lists will likely be hybrid, combining classic URL targets with API endpoints for programmatic platforms, ensuring that automated SEO remains one step ahead of manual-only crawling patterns.